Introduction

A few months ago, a client asked me to take care of their Kubernetes cluster (hosted on AWS and GCP). In their opinion, the costs were exorbitantly high for relatively simple and lean websites. Sure, they had many visits, but nothing excessive from a development standpoint.

I kindly declined. Unfortunately, their situation is all too common these days: they hired developers accustomed to working that way, convinced that a system administrator is now unnecessary because "the cloud has infinite potential." They were used to considering optimization as secondary because "we have infinite power" (and this is already a spoiler for the ending).

Being open to dialogue and new experiences, they asked for my opinion on the matter. We talked for a while, and I explained that, in my view, for the type of setup they had (standard, with various replicas and variants, but primarily based on two platforms), it didn't make sense. I saw it as complicating things. An over-engineering of something simple. Like taking a cruise ship to cross a river.

They then asked me to create something simple that would serve as a development server and for backups, to understand what kind of solution I had in mind.

The Solution

So, I started building everything. I began with FreeBSD 13.2-RELEASE, but in the meantime, 14.0-RELEASE came out, so that’s the version I delivered.

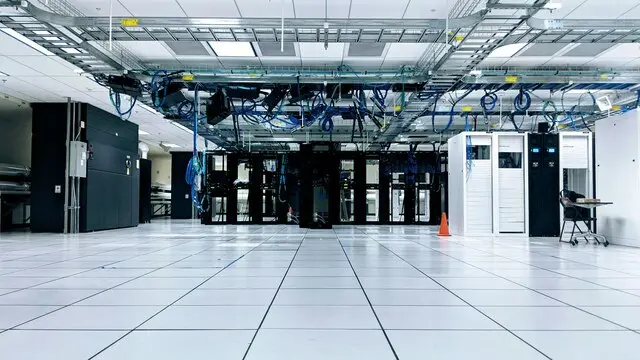

I installed the operating system on a physical server leased from one of the main European providers. Through one of the provider's auctions (good deals can be found on weekends), the client found a sufficiently powerful machine, with 128GB of RAM, two NVMe drives of 1TB each, and two spinning disks of 2TB each, for less than 100 euros per month. They also took a second, less powerful machine for additional backups, including backups of the first one.

Implementation

I decided to keep the host as clean as possible and concentrated the services in jails (managed by BastilleBSD) and VMs. The machine was divided as follows:

- A series of bridges - to be used for different projects. Jails of the same project and/or type use the same bridge and can communicate with each other, sharing some resources (MariaDB, etc.).

- A bhyve VM with Alpine Linux - in my opinion, the best distribution for running Docker containers. Do we really need systemd just to launch Docker? They mainly use it as a pre-production test bench, connected via VPN to their company LAN. It is the core of their "online" development, i.e., outside their computers. It has 32GB of RAM, 200GB of disk (obviously bhyve is configured with NVMe drivers), and 4 cores assigned.

- A VNET jail with a reverse proxy (nginx) - they know how to modify virtual hosts and generate certificates with certbot, pointing to the underlying jails.

- A series of "empty" VNET jails, to be cloned, for each type of setup (they mainly have CMS based on WordPress and Laravel, so with all dependencies inside - nginx, php, redis, etc. except the databases).

- A VNET jail with MariaDB installed, to be cloned, to be attached to different projects as needed.

- zfs-autobackup performs local snapshots, keeping: one every 15 minutes for 3 hours, one per hour for 24 hours, one per day for 3 days.
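The retention policy in the last item can be modeled in a few lines. This is only a simplified sketch of the idea (time is split into buckets per rule, and the oldest snapshot in each bucket is kept while it is young enough); it is not zfs-autobackup's actual thinning code, and the names `RULES` and `snapshots_to_keep` are made up for illustration.

```python
from datetime import datetime, timedelta

EPOCH = datetime(1970, 1, 1)

# (bucket size, how far back the rule applies), taken from the policy above.
RULES = [
    (timedelta(minutes=15), timedelta(hours=3)),
    (timedelta(hours=1), timedelta(hours=24)),
    (timedelta(days=1), timedelta(days=3)),
]


def snapshots_to_keep(snapshots, now, rules=RULES):
    """Return the set of snapshot timestamps that at least one rule keeps.

    For each rule, the oldest snapshot in every time bucket is kept,
    as long as its age does not exceed the rule's time span.
    """
    keep = set()
    for period, max_age in rules:
        seen_buckets = set()
        for ts in sorted(snapshots):  # oldest first
            age = now - ts
            if age < timedelta(0) or age > max_age:
                continue
            bucket = (ts - EPOCH) // period  # integer bucket index
            if bucket not in seen_buckets:
                seen_buckets.add(bucket)
                keep.add(ts)
    return keep


if __name__ == "__main__":
    now = datetime(2024, 1, 10, 12, 0)
    # Hypothetical history: one snapshot every 15 minutes for five days.
    history = [now - timedelta(minutes=15 * k) for k in range(5 * 96)]
    kept = snapshots_to_keep(history, now)
    print(f"{len(history)} snapshots taken, {len(kept)} retained")
```

The point of a policy like this is that recent history stays dense (useful for "undo" after a mistake) while older history thins out, so the number of snapshots stays bounded.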

Backups are also performed using zfs-autobackup. For fast disaster recovery, a zfs send (with the corresponding zfs receive) runs every 10 minutes to another machine (the other, smaller one, also taken at auction), which has the same bridges, firewall rules, BastilleBSD, and bhyve installed - ready to start in case of disaster. Since this is a test server, we didn't consider implementing proper HA - at the moment, it wouldn't make sense.

They also have another job with zfs-autobackup that performs an additional backup on a server (Debian in their offices). Safe data, in my opinion, are those in storage under your b...ench.

I delivered everything to them and gave a brief course to the more experienced devs on how to manage things. No explanation on the Alpine Linux VM, but I showed them the jails, how to clone, configure, and manage them.

Real-world Testing

For a while, I didn't hear from them. Then, after a few weeks, one of the devs contacted me urgently because a junior had mistakenly deleted an entire project from one of the jails. I explained that the local snapshots could be restored with a single command, and he was thrilled. He restored both the development jail and the database jail from snapshots taken two minutes before the "mishap", and they were back at work immediately.

I realized that this event would change some of their procedures and criteria.

After that, I didn't hear from anyone for months. This morning, I received a call from their manager, whom I hadn't spoken to since the beginning, and he told me how things had gone over these months.

Lessons Learned

First, some context: this person has good communication and commercial skills but little technical background. He is open-minded and tends to study carefully what is proposed to him. He doesn't discard any solution a priori, without first weighing its pros and cons.

They used to lease servers with cPanel and host their content on them. The devs who arrived a few years ago suggested a technological transition: eliminating these "obsolete" servers and "outdated" methodologies, pushing everything to the cloud, and containerizing everything. When we first talked, he told me how they were "lucky to make that transition because their load had increased enormously and the old servers probably wouldn't have handled the load"; autoscaling, instead, had saved them. I had some reservations about autoscaling without particular controls, but clearly, I cannot impose my choices on others.

To cut a long story short: seeing what happened with that junior dev's mistake (and how simple it was to get going again immediately), they decided to increase the use of FreeBSD jails and reduce, at least for secondary loads, the use of their cloud managed with Kubernetes. As they transitioned to jails, however, they noticed some slowdowns, which worsened day by day. According to the devs, the right move was to go back to autoscaling ("we need moar powaaaaar!!!") but, fortunately, their boss decided to investigate carefully. They realized that these workloads (based on Laravel) were storing sessions in files. Over time, these millions of files (several gigabytes per day) slowed everything down because, for specific operations, Laravel scanned the entire directory. In other words, on the "cloud," they had needed much more power (and much more disk space, but that was cheaper) to carry a load that was, in fact, unnecessary. After realizing this, they moved the sessions to Redis. Needless to say, everything became dramatically faster, even compared to the previous setup on Kubernetes with autoscaling.
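To make the cost difference concrete, here is a toy model in Python. It is not Laravel's code and not a Redis client: `sweep_expired_files` stands in for any file-based cleanup that has to look at every session file, and `TTLStore` is a hypothetical in-memory stand-in for a key-value store where each key carries its own expiry.

```python
import os
import time


def sweep_expired_files(session_dir, lifetime_seconds, now=None):
    """File-style cleanup: each sweep must examine every file in the directory.

    Returns (files_examined, files_removed). The work grows with the total
    number of session files, not with the number of active users.
    """
    now = time.time() if now is None else now
    examined = 0
    expired = []
    with os.scandir(session_dir) as entries:
        for entry in entries:
            if not entry.is_file():
                continue
            examined += 1
            if now - entry.stat().st_mtime > lifetime_seconds:
                expired.append(entry.path)
    for path in expired:
        os.remove(path)
    return examined, len(expired)


class TTLStore:
    """Toy key-value store with per-key expiry (assumption: models the idea only)."""

    def __init__(self):
        self._data = {}

    def put(self, key, value, ttl_seconds, now=None):
        now = time.time() if now is None else now
        self._data[key] = (value, now + ttl_seconds)

    def get(self, key, now=None):
        """Look up one key; no other session is touched."""
        now = time.time() if now is None else now
        item = self._data.get(key)
        if item is None:
            return None
        value, expires_at = item
        if now >= expires_at:
            del self._data[key]
            return None
        return value
```

With millions of files, every sweep in the first model walks the whole directory, while a lookup in the second touches a single key. That is the shape of the problem they hit, independent of where the servers run.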

At that point, it was clear that one of the problems with their setup was (as often happens) poor optimization. Today, there's a tendency to rush and "throw in" functions, features, libraries, plugins, etc. without considering the interactions and consequences. If it works, it's fine, even if it increases computational complexity exponentially just to, for example, change the color of an icon (an absurd example, but it gives the idea).

They then started moving even the main Laravel workloads (thanks to the optimization implemented). At this point, they began moving some of the WordPress sites, even though they were extremely concerned about them. In the cluster, every day, at fairly irregular intervals, the load would rise and everything would slow down until autoscaling had scaled up to the imposed limits. The CPU was at 100% on all containers, and the devs noticed that the load came from a series of "php" processes. Recreating the containers helped for a few minutes, but did not solve the problem.

To their great surprise, all this did not happen on the FreeBSD jails. The load was significantly lower, without any of these spikes. Satisfied, they decided to use this as their final setup. One of the devs, however, wanted to get to the bottom of it and decided to run a test: he moved some of these WordPress sites to the Alpine VM, on Docker. At that point, the spikes resumed, saturating the CPU of the Alpine machine.

Without going into details, they eventually realized that there was a vulnerability in one (or more) of the many plugins installed on the WordPress sites, which was being exploited to inject a process, probably a cryptominer. The process was named "php" - so the devs, not being system experts, never checked whether it was really php or another process pretending to be it. On FreeBSD, none of this happened because the injected executable could not run - the Linux compatibility layer was not enabled on the server.

Until then, they had considered these (expensive) spikes organic and had not worried too much about them. They were paying for their friendly intruders to mine.
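A process name alone proves very little, because a process can choose its own name. The following minimal sketch, for a Linux host such as the Alpine VM, compares the name with the path of the binary actually being executed. The list in `TRUSTED_DIRS` is an assumption to adapt to the system at hand, and `is_suspicious` and `scan_linux_proc` are names invented for this example; it is a quick sanity check, not a security tool.

```python
import os
from pathlib import Path

# Assumption: directories where a legitimate php binary is expected to live.
TRUSTED_DIRS = ("/usr/bin", "/usr/sbin", "/usr/local/bin", "/usr/local/sbin")


def is_suspicious(name, exe_path, trusted_dirs=TRUSTED_DIRS):
    """Flag a process that calls itself php* but runs from an unexpected place."""
    if not name.startswith("php"):
        return False
    # Linux appends " (deleted)" to the exe link when the binary was removed
    # from disk after launch, a common trick to hide a dropped executable.
    if exe_path is None or exe_path.endswith(" (deleted)"):
        return True
    return os.path.dirname(exe_path) not in trusted_dirs


def scan_linux_proc(proc_root="/proc"):
    """Return (pid, name, exe) for every suspicious process readable in /proc."""
    findings = []
    for entry in Path(proc_root).iterdir():
        if not entry.name.isdigit():
            continue
        try:
            name = (entry / "comm").read_text().strip()
            exe = os.readlink(entry / "exe")
        except OSError:
            # Kernel threads, processes that just exited, or no permission.
            continue
        if is_suspicious(name, exe):
            findings.append((int(entry.name), name, exe))
    return findings


if __name__ == "__main__":
    for pid, name, exe in scan_linux_proc():
        print(f"pid {pid}: '{name}' is running from {exe}")
```

Run as root inside the VM or container host, it lists any process named like php whose executable lives somewhere such as a temporary or upload directory, which would have been a strong hint in a case like this one.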

Conclusion

They asked me to help, as much as possible, to move other services to FreeBSD. It won't be easy, and we will probably need to use bhyve a lot, but they decided that this is the platform they want to focus on in the coming years.

Undoubtedly, this is a success story for FreeBSD and, indirectly, for correct and careful management of one's resources. Too often today, there is the superficial belief that the cloud, with its "infinite" resources, is the solution to all problems, and that Kubernetes is the best solution for everything. I, on the other hand, have always believed that there is a right tool for every job. You can hammer a nail with a screwdriver, but it's not the most suitable and efficient tool.

Today they spend about 1/10 of what they used to spend, and they have more control over their data and the tools they use. Undoubtedly, the previous costs were also caused by poor optimization and control by those who managed the infrastructure, but the question is: how often do people decide that, in the end, it is okay to spend more (especially if it is someone else's money) rather than spend hours chasing a problem like this? Having defined and limited resources (however generous) poses different problems, but they are problems of optimization. And in the age of energy and resource savings, it might be wise to give more importance to optimization.

Abundance led to waste.